Duplicate, submitted URL not selected as canonical: How to Fix It

Duplicate content is one of the most common SEO problems websites run into, and Search Console often gives no clear indication of why it is happening, even when everything seems to be set up correctly.

If you have landed on this article without knowing what the issue is, you may not have submitted your URLs to Google Webmaster Tools (now Google Search Console), which digital marketing agencies and web design companies use to find and fix a website's errors in Google Search.

We advise every website owner to learn how to submit URLs to Google Search, because it will also help you check whether your SEO agency is doing a good job.

If you search Google for this error, most answers will tell you that somewhere on your site, some URLs are duplicated.

That is correct, but the cause may be something you have not yet considered. We run a large, complex website with many pages and features, and it has produced issues similar to the ones you are probably facing. So we put together a team to figure out what was wrong with our SEO.

Even though our search engine optimization team has 5 to 10 years of experience, they could not figure out the problem at first. We have now identified the real issue and are in a position to solve it.

Below, we walk through straightforward steps that should help you clean up your website right away.

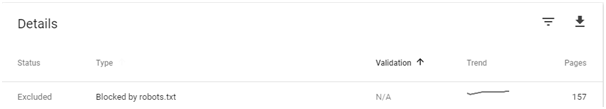

Robots.txt

Try to keep your robots.txt file clean. In our opinion, this matters because every URL blocked by robots.txt is flagged in Search Console, as in the screenshot above, and those warnings clutter your reports.

Google says this does not affect your rankings, but in our experience, rankings improved significantly once we fixed it, so this article will help you resolve the issue.

Keep only your website's files in the site's main directory, and move anything else (other applications or tools) to its own subdomain.
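To see which of your URLs a set of robots.txt rules blocks before Search Console flags them, here is a minimal sketch using Python's standard `urllib.robotparser` module. The rules, the `example.com` URLs, and the `blocked_urls` helper are hypothetical. Python's parser uses simple prefix matching and does not support wildcard patterns such as `*` or `$` inside paths, so treat this as a rough check rather than an exact reproduction of how Googlebot reads the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules for illustration only; paste in your own file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /tmp/
"""


def blocked_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules block for `agent`."""
    parser = RobotFileParser()
    # parse() takes the file as a list of lines instead of fetching it over HTTP.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    pages = [
        "https://example.com/",
        "https://example.com/admin/login",
        "https://example.com/products/glasses",
    ]
    for url in blocked_urls(ROBOTS_TXT, pages):
        print("Blocked:", url)
```

Any URL this reports as blocked, but that you actually want indexed, points to a rule worth removing.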

Duplicate URLs and Google

Duplicate content is content that appears, word for word or nearly so, on more than one page. It is a tricky topic, but Google has said that having many URLs with similar content does not, by itself, hurt a site. Google's John Mueller said:

"If you had the same content in textual form on your website where it’s clearly duplicate content, then what would happen there is we would pick one of those versions to show in Google Search.

It’s not the case that we would say, ‘Oh, this website has some duplicate content, we will not show it at all in Google.’ Rather, we will say, ‘There are two versions here. We will pick one of these to show and we will just not show the other one.’"

Why doesn't duplicate content hurt your ranking? One reason is that many retailers use the same content, images, and product descriptions on their websites. Even so, duplicate content has a cost: Google may choose not to crawl the duplicate page, and crawling effort spent on duplicates is effort not spent on your unique content.

Finally, even if your content ranks well, search engines may pick the wrong URL as the "original." Canonicalization lets you control which of your duplicate URLs is treated as the original.

The problem with URLs

You might be thinking, "Why would anyone duplicate a page?" and assume that canonicalization isn't something you need to worry about. That assumption is wrong: a human sees one page at several addresses, but a bot sees several different pages.

In search engine crawling, every unique URL is a separate page.

For example, search crawlers might be able to reach your homepage in all of the following ways:

- http://www.kabeeroptics.com

- https://www.kabeeroptics.com

- http://kabeeroptics.com

- http://kabeeroptics.com/index.php

- http://kabeeroptics.com/index.php?r...
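To illustrate why a crawler treats variants like these as separate pages, the sketch below maps each one to a single preferred form. It assumes `https://www.example.com` is the preferred version and that dropping `index.php`/`index.html` and the whole query string is safe, which is only true if your pages never use query parameters to show different content. The `canonicalize` helper, its rules, and the `?ref=home` query are hypothetical; this is not how Google itself canonicalizes.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumed preferred version of the site (hypothetical); use the one set in Search Console.
CANONICAL_SCHEME = "https"
CANONICAL_HOST = "www.example.com"

# Hypothetical default index files that should collapse to their directory.
INDEX_FILES = ("index.php", "index.html")


def canonicalize(url: str) -> str:
    """Map a URL variant to the single preferred version (illustrative rules only)."""
    parts = urlsplit(url)
    path = parts.path or "/"
    # Strip a trailing default index file, e.g. /index.php -> /
    for index in INDEX_FILES:
        if path.endswith("/" + index):
            path = path[: -len(index)]
            break
    # Force the preferred scheme and host, and drop the query string and fragment.
    return urlunsplit((CANONICAL_SCHEME, CANONICAL_HOST, path, "", ""))


if __name__ == "__main__":
    variants = [
        "http://www.example.com",
        "https://www.example.com",
        "http://example.com",
        "http://example.com/index.php",
        "http://example.com/index.php?ref=home",  # hypothetical query string
    ]
    print(len(set(variants)), "distinct URLs")  # 5 distinct URLs
    print({canonicalize(u) for u in variants})  # {'https://www.example.com/'}
```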

The most reliable fix for these duplicate homepage URLs is a 301 (permanent) redirect from every variant to the one version you set in Search Console, such as https://webnetpk.com. All of your URL variants should redirect to that version.
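A 301 redirect to a single host is usually configured in the web server (for example Apache or nginx), but the logic is the same everywhere. Below is a minimal sketch of it as Python WSGI middleware; `CANONICAL_ORIGIN`, `canonical_redirect`, and the demo `site` app are hypothetical. It assumes the app sees the real scheme in `wsgi.url_scheme`. Behind a proxy or load balancer that terminates HTTPS, you would need to read the forwarded scheme instead, or every request could end up in a redirect loop.

```python
# Assumed preferred version of the site (hypothetical); use the one set in Search Console.
CANONICAL_ORIGIN = "https://www.example.com"


def canonical_redirect(app):
    """Wrap a WSGI app so requests on any other scheme/host get a 301 to CANONICAL_ORIGIN."""
    def wrapper(environ, start_response):
        scheme = environ.get("wsgi.url_scheme", "http")
        host = environ.get("HTTP_HOST") or environ.get("SERVER_NAME", "")
        if f"{scheme}://{host}" != CANONICAL_ORIGIN:
            # Keep the same path and query so deep links land on the matching page.
            path = environ.get("PATH_INFO") or "/"
            query = environ.get("QUERY_STRING", "")
            location = CANONICAL_ORIGIN + path + (f"?{query}" if query else "")
            start_response("301 Moved Permanently",
                           [("Location", location), ("Content-Length", "0")])
            return [b""]
        return app(environ, start_response)
    return wrapper


def site(environ, start_response):
    """Hypothetical stand-in for the real website."""
    start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"Hello from the canonical host"]


# Serve `application` with any WSGI server (for example wsgiref or gunicorn).
application = canonical_redirect(site)
```

This sketch only fixes the scheme and host; collapsing `/index.php` to `/` would be a second rule in the same place.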

Duplicate URL variants cause a lot of indexing issues, and many companies need developers to fix them. SEO needs a team; it is not a job for one freelancer working alone.

The other way to handle duplicate URLs is to add a canonical tag (`<link rel="canonical">`) to every page. This is time-consuming, but manageable if you have only a few pages. If you have many pages, use 301 redirects instead.
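A canonical tag sits in the page's `<head>` and looks like `<link rel="canonical" href="https://www.example.com/page/">`. To audit pages, the sketch below uses Python's standard `html.parser` to collect every canonical tag in a page, so you can flag pages that have none or more than one. `CanonicalFinder` and `canonical_urls` are hypothetical helper names, and the sample HTML is made up.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collects the href of every <link rel="canonical"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.canonicals: list[str] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names; self-closing tags land here too.
        if tag != "link":
            return
        attributes = dict(attrs)
        # rel can hold several space-separated values, so split before comparing.
        rels = (attributes.get("rel") or "").lower().split()
        if "canonical" in rels and attributes.get("href"):
            self.canonicals.append(attributes["href"])


def canonical_urls(html: str) -> list[str]:
    """Return all canonical URLs declared in an HTML document."""
    finder = CanonicalFinder()
    finder.feed(html)
    finder.close()
    return finder.canonicals


if __name__ == "__main__":
    page = '<html><head><link rel="canonical" href="https://www.example.com/glasses/"></head></html>'
    found = canonical_urls(page)
    if len(found) != 1:
        print("Expected exactly one canonical tag, found:", found)
    else:
        print("Canonical:", found[0])
```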

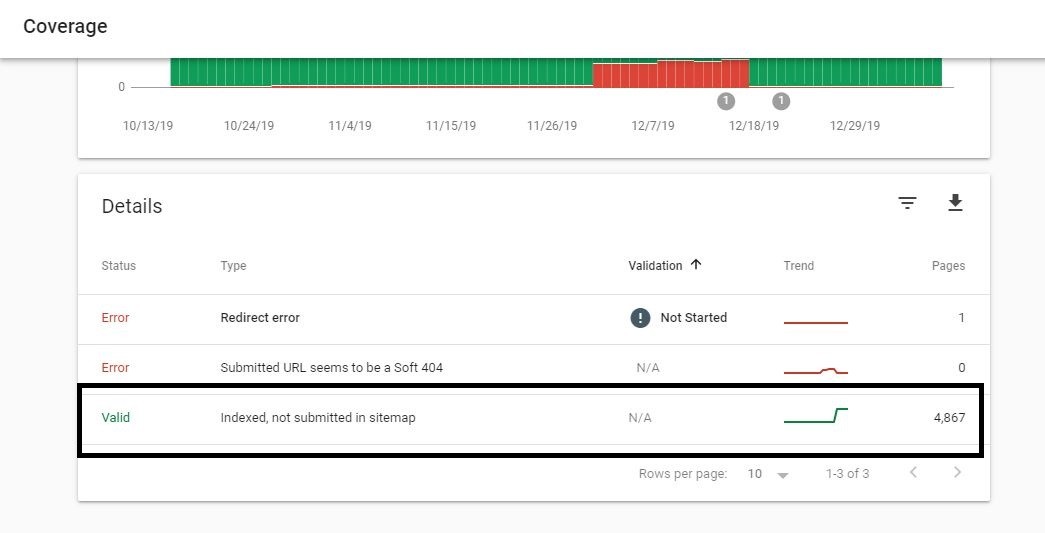

Even with 301 redirects in place, we still faced the same error: "Duplicate, submitted URL not selected as canonical."

The problem is that even after all of this was resolved, Google still reported the exact same error. At first, only a few pages are usually affected, but as Google crawls more of the site, the number grows. Are you still facing the same problem?

If not, great: you have resolved it. Still, read on to understand the problems others run into after fixing everything, so you can avoid them.

If your answer is yes, the solution is just a minute away.

The tricky part of adding a canonical tag is choosing the URL you want Google to crawl. Many people don't know this, but it is very important, so we will repeat it: the canonical URL must be exactly the URL you want Google to crawl, and the same URL that appears in your sitemap. Then give Google some time; the error should clear once Googlebot recrawls the page, which depends on Google's crawl schedule. If Google has recrawled your URL and the problem is still not fixed, make sure the canonical URL and the sitemap URL are exactly the same.
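In Search Console, a "submitted" URL is one listed in a sitemap you submitted, so the rule above (canonical URL and sitemap URL must match exactly) can be checked automatically. Below is a minimal sketch in Python, assuming you already have a mapping from each page URL to the href of its canonical tag, for example collected with a crawler. The sample sitemap, URLs, and helper names are hypothetical. It compares strings exactly, so a trailing slash or an `http`/`https` difference counts as a mismatch, which is intentional.

```python
import xml.etree.ElementTree as ET

# Namespace used by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml: str) -> set[str]:
    """Return every <loc> URL listed in a sitemap."""
    root = ET.fromstring(sitemap_xml)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text}


def canonical_mismatches(sitemap_xml: str, canonicals: dict[str, str]) -> list[str]:
    """Compare sitemap URLs with canonical tags; `canonicals` maps page URL -> canonical href."""
    listed = sitemap_urls(sitemap_xml)
    problems = []
    # A submitted URL whose own canonical tag points somewhere else.
    for url in sorted(listed):
        declared = canonicals.get(url)
        if declared is not None and declared != url:
            problems.append(f"sitemap lists {url} but its canonical tag points to {declared}")
    # A canonical URL that is missing from the sitemap.
    for page, declared in sorted(canonicals.items()):
        if declared not in listed:
            problems.append(f"{page}: canonical {declared} is not in the sitemap")
    return problems


if __name__ == "__main__":
    sitemap = (
        '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
        "<url><loc>https://www.example.com/</loc></url>"
        "<url><loc>https://www.example.com/glasses</loc></url>"
        "</urlset>"
    )
    canonicals = {
        "https://www.example.com/": "https://www.example.com/",
        "https://www.example.com/glasses": "https://www.example.com/glasses/",
    }
    for problem in canonical_mismatches(sitemap, canonicals):
        print(problem)
```

Each reported line is a page where the sitemap and the canonical tag disagree; fixing either side so they match exactly is the goal.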

Fixing your sitemap is very important, and it should be accurate. We hope you will have no more problems, but if you do, you can ask us for help.
